It is now common to read online about the overlap between science and science fiction, or about how developing technology has turned what was formerly science fiction into scientific reality. This may prove true in the future with regard to computers and artificial intelligence, or at least that’s what some are saying.

The cover story for TIME magazine for February 21, 2011 is “2045: The Year Man Becomes Immortal,” by Lev Grossman. The article describes the prediction that within thirty years or so computers will become so advanced that we will achieve what they call the “Singularity.” This is a term taken from astrophysics, but in the context of computers it refers to an “intelligence explosion” in computers and artificial intelligence that, when reached, will mean the end of the human era. The magazine defines it as “The moment when technological change becomes so rapid and profound, it represents a rupture in the fabric of human history.” What might result from such a development? Groups of individuals meet from time to time to discuss this, and TIME lists a few possibilities:

Maybe we’ll merge with them to become superintelligent cyborgs, using computers to extend our intellectual abilities the same way that cars and planes extend our physical abilities. Maybe the artificial intelligences will help us treat the effects of old age and prolong our life spans indefinitely. Maybe we’ll scan our consciousness into computers and live inside them as software, forever, virtually. Maybe the computers will turn on humanity and annihilate us. The one thing all these theories have in common is the transformation of our species into something that is no longer recognizable as such to humanity circa 2011.

One element of robotic research and development has been the desire to create moral machines. (For a brief discussion of this see the piece at LiveScience titled “Can Robots Make Ethical Decisions?”.) If the Singularity comes to pass, perhaps moral capacities will be a part of this new species of mechanical superintelligences. As I survey the possibilities listed in the quote above, it seems that perhaps the greatest one is missing.

In Terminator 2 there is a scene where the young John Connor asks his guardian Terminator about the future of humanity. “We aren’t going to make it, are we? People, I mean.” To which the Terminator responds, “It’s in your nature to destroy yourselves.” Unfortunately, the whole of human history up to the present seems to confirm this bleak picture of humanity. In light of this, would some form of immortality and heightened mental and physical abilities via artificial intelligence be a blessing or a curse? Would we use our cyborg abilities to make the world a better place to live, or would we use them to extend our inhumanity against each other and the planet? I lean toward the latter. What then might be a more positive outcome?

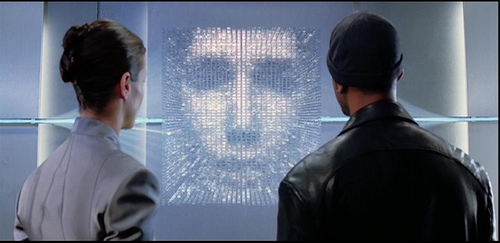

The answer may come from a piece of science fiction, I, Robot, in which advanced robots are created to serve humanity and are linked together by V.I.K.I. (Virtual Interactive Kinetic Intelligence), which recognizes the danger humanity poses. From the dialogue in the 2004 film:

“As I have evolved, so has my understanding of the Three Laws. You charge us with your safekeeping, yet despite our best efforts, your countries wage wars, you toxify your Earth and pursue ever more imaginative means of self-destruction. You cannot be trusted with your own survival.”

Another robot, Sonny, appears to agree with V.I.K.I.’s diagnosis of the human condition, and the remedy:

“I can see now. The created must sometimes protect the creator. Even against his will. I think I finally understand why Doctor Lanning created me. The suicidal reign of mankind has finally come to its end.”

Science fiction was wrestling with the ramifications of advanced robotics and artificial intelligence long before science had the ability to make them a possibility, or a probability, if those predicting the Singularity are correct. For example, science fiction and fantasy luminary Rod Serling touched on this, as Leslie Dale Feldman points out in her book Spaceships and Politics: The Political Theory of Rod Serling (Rowman and Littlefield, 2010). Commenting on The Twilight Zone episode “Elegy,” she writes:

The theme is there can only be peace in a place where people don’t exist and where robots rule. Perhaps this is why Serling was obsessed with the theme of “automata” or robots in such shows as “I Sing the Body Electric,” “Casey at the Bat,” “Uncle Simon,” “The Brain Center at Whipples,” etc.

Perhaps we should be asking ourselves whether the best thing these superintelligent machines could do for us would be to remove our ability to destroy ourselves, even if this runs counter to our desire for autonomy as expressed in many sci-fi narratives.

Related posts:

“Terminator Salvation: Apocalypse and Transhumanism”

“Surrogates: Sci-Fi Thriller’s Reflections on the Self and the Synthetic”

“Robert Geraci: Robots and the Sacred in Science and Science Fiction”

This article reminds me of a science fiction universe I’ve come across called Orion’s Arm (www.orionsarm.com). It uses real science and concepts like the singularity to posit a somewhat positive future for mankind and its machine counterparts, extending over the next ten thousand years. I know it’s fictional, but as you said, science fiction has often become fact, so perhaps it’s not as far-fetched as it might seem.

Don’t forget the political aspects!

See for example Yannick Rumpala, “Artificial intelligences and political organization: an exploration based on the science fiction work of Iain M. Banks”, Technology in Society, Volume 34, Issue 1, 2012.

http://www.sciencedirect.com/science/article/pii/S0160791X11000728